Anthropic draws a hard line on AI ethics

One of the main stories this past week has been the clash between Anthropic, the maker of the Claude model, and the Trump administration.

Anthropic has found itself at the centre of a high-profile dispute with the US government after refusing to remove ethical guardrails around the military use of Claude. The company has been clear about its two red lines: Claude should not be used for mass domestic surveillance, or for fully autonomous weapons without human oversight.

The Pentagon pushed for approval to use the model for “all lawful purposes”, which Anthropic would not agree to. In response, President Trump ordered US agencies to stop using Anthropic’s AI and labelled the company a supply chain risk, a term usually reserved for foreign adversaries, and never before applied to a US company.

Shortly after, OpenAI secured the Pentagon deal. While OpenAI says similar safety principles apply, the key difference is control: Anthropic wanted to enforce its own limits, while OpenAI agreed to let the US government decide what counts as lawful use.

Why it matters

This isn’t just a policy dispute. It’s a real-world test of who controls how advanced AI is used, and trust is becoming a differentiator rather than a nice-to-have.

The situation highlights the tension between AI safety commitments and national security priorities. Whether the vendor or the state sets the limits on use raises ethical questions about how AI is deployed in defence and surveillance.

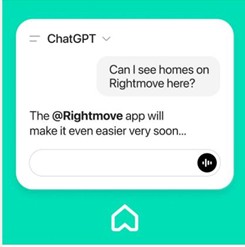

Public reaction has largely favoured Anthropic, with Claude climbing to the top of US app download charts after the news broke, while OpenAI’s Pentagon deal has triggered a visible backlash and calls online to reconsider use of ChatGPT.

For brands, platforms and agencies, this reinforces the importance of clear AI governance, transparency, and choosing partners whose values align with how you want to operate as AI scales.